# 3-2. 데이터 증강 (결함합성)

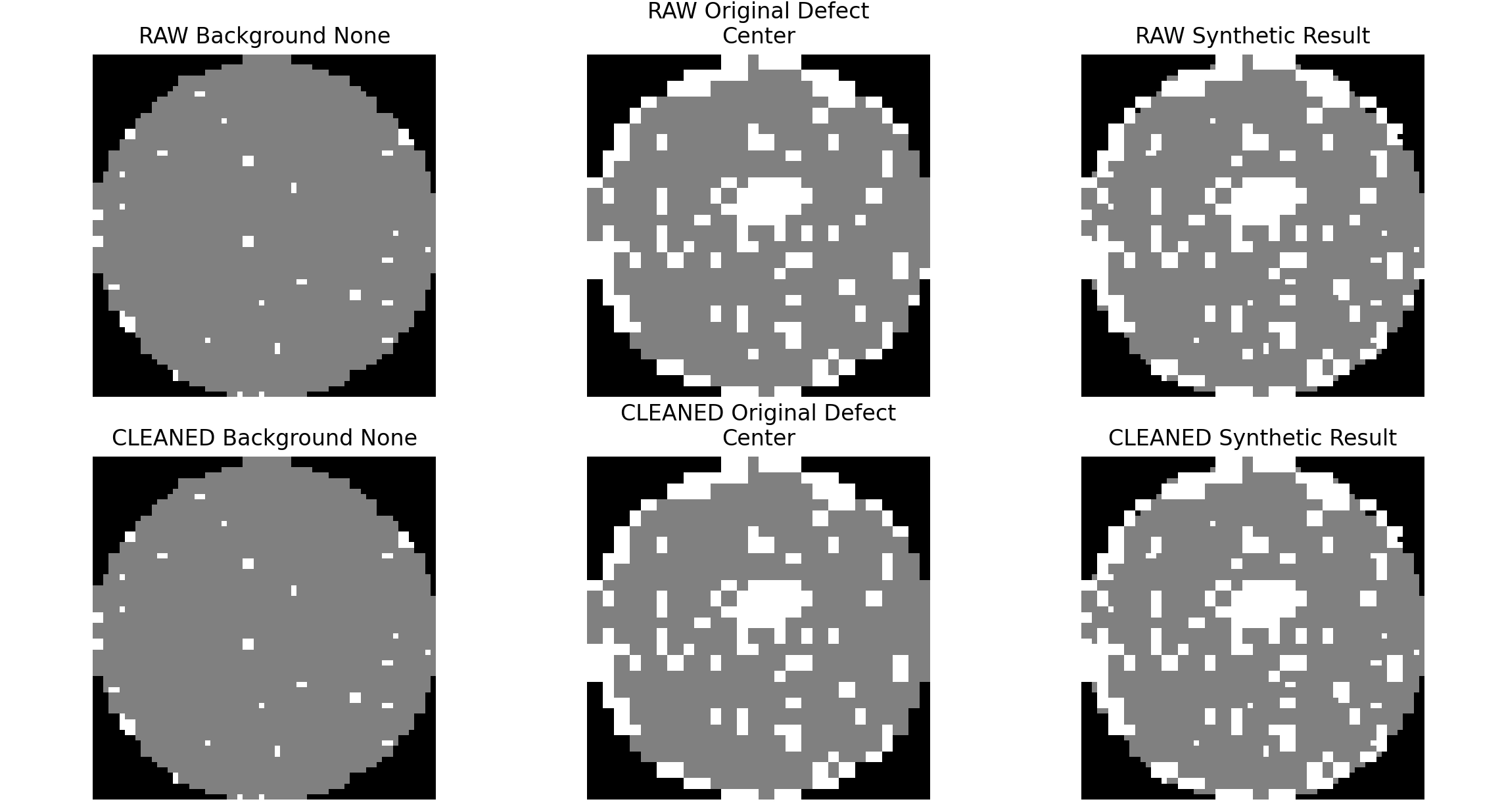

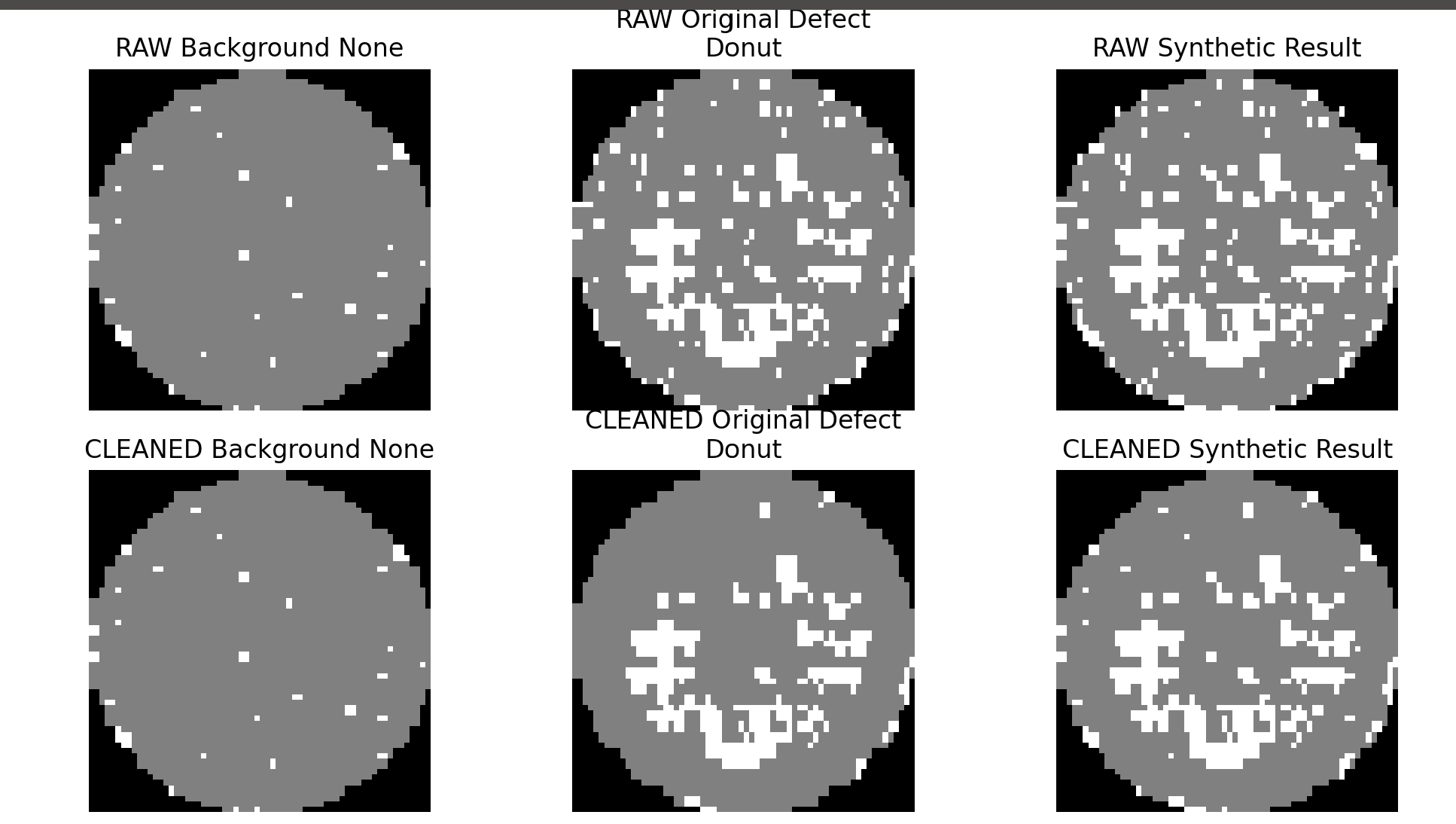

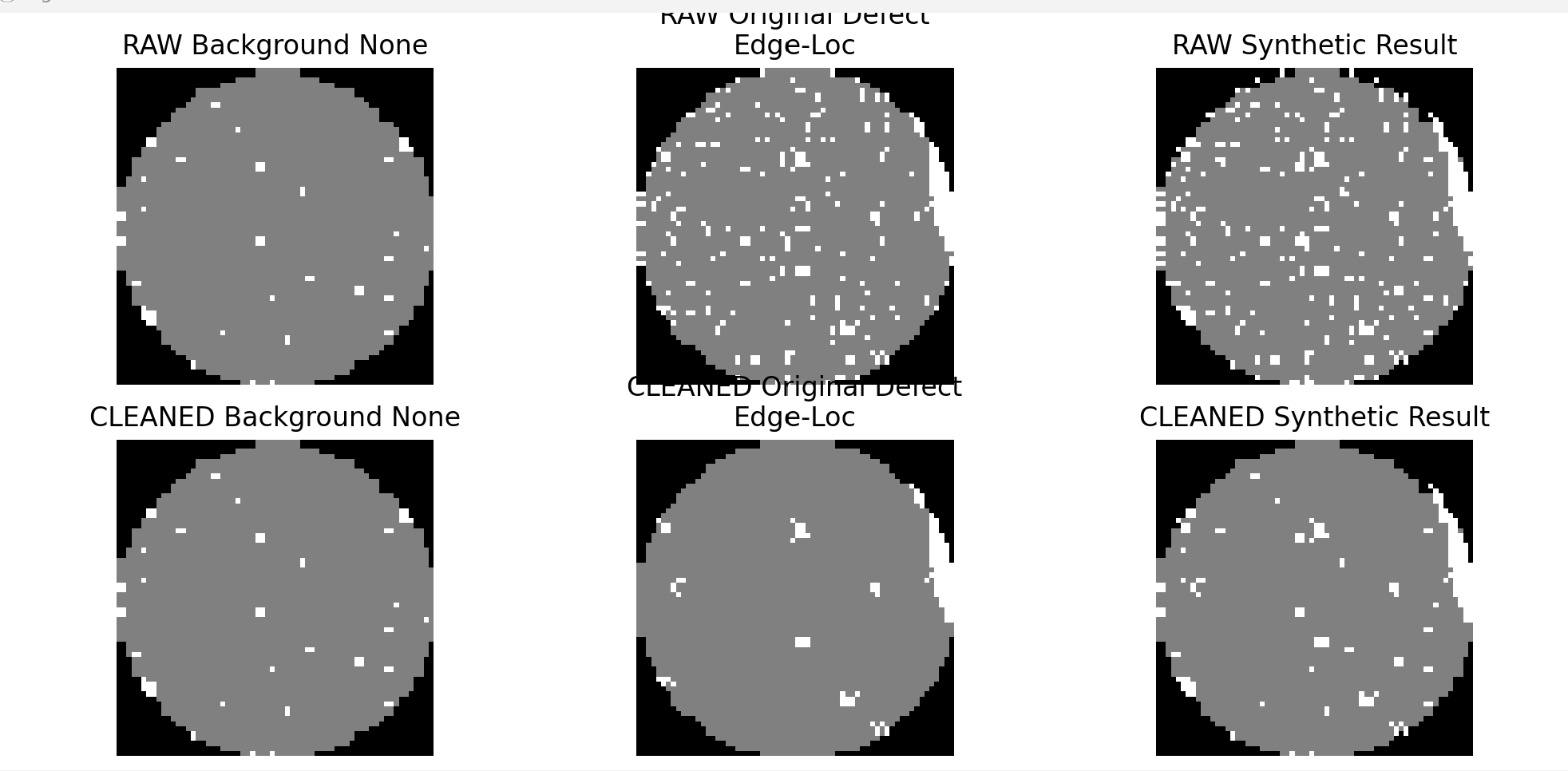

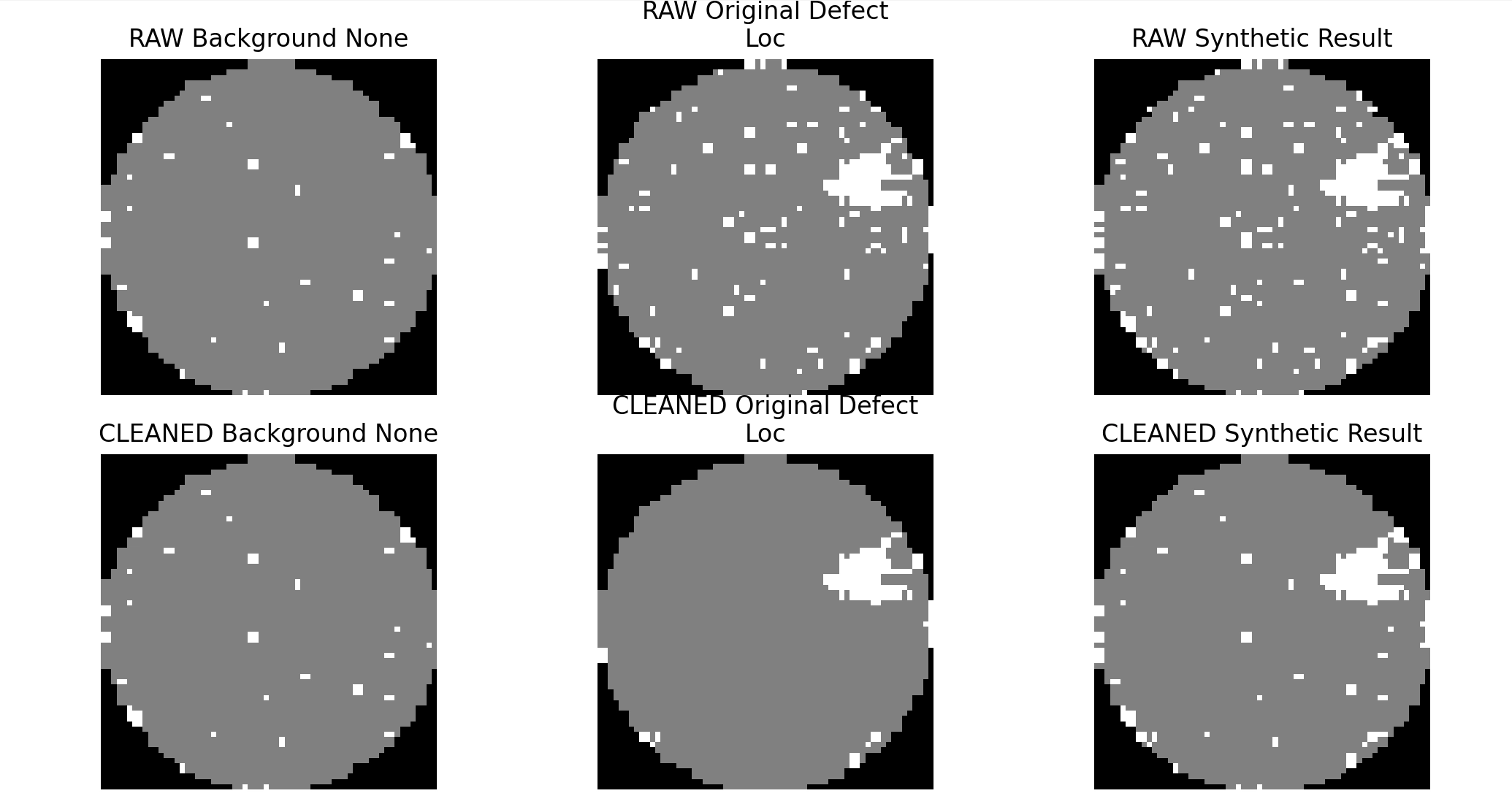

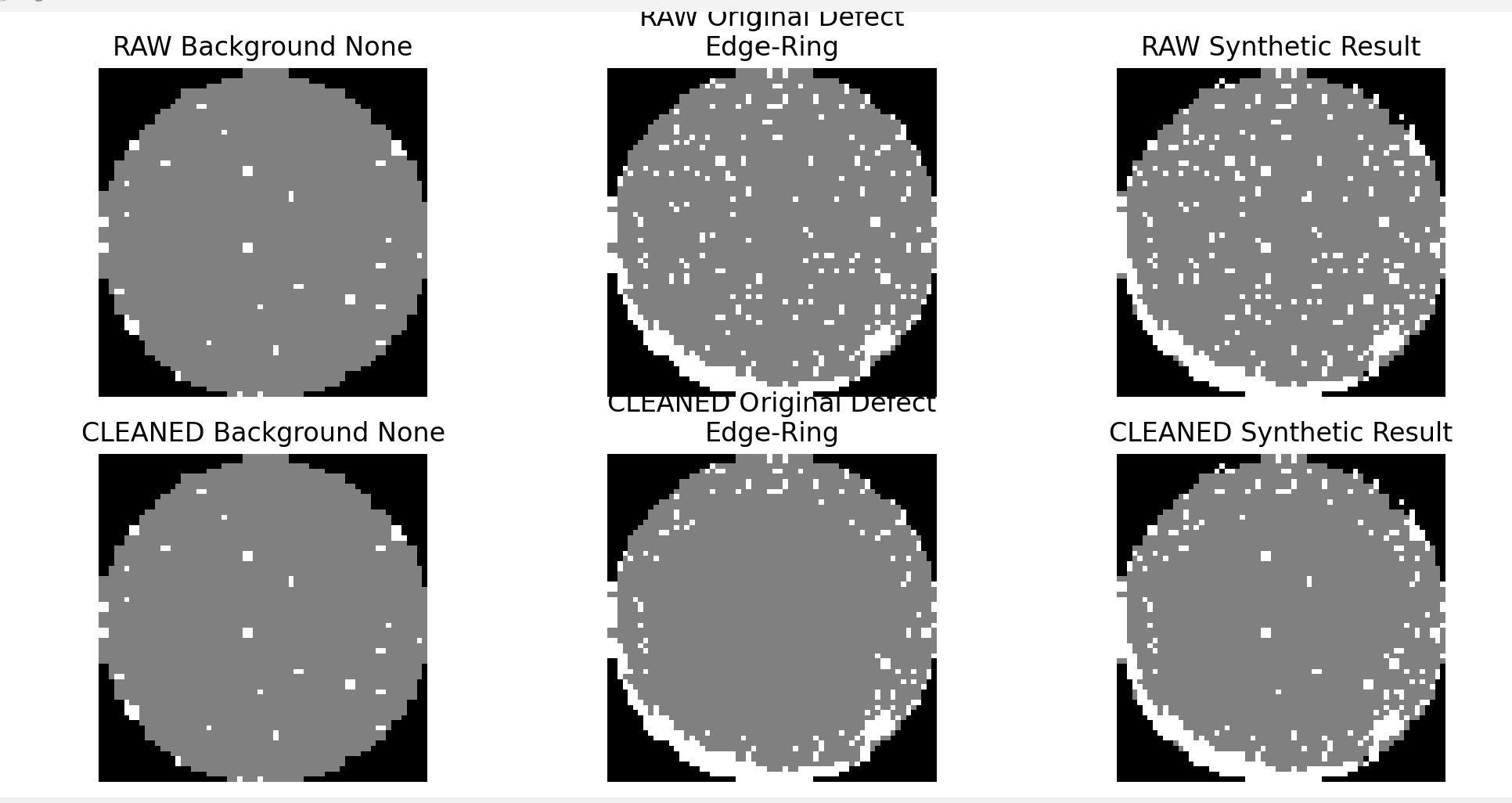

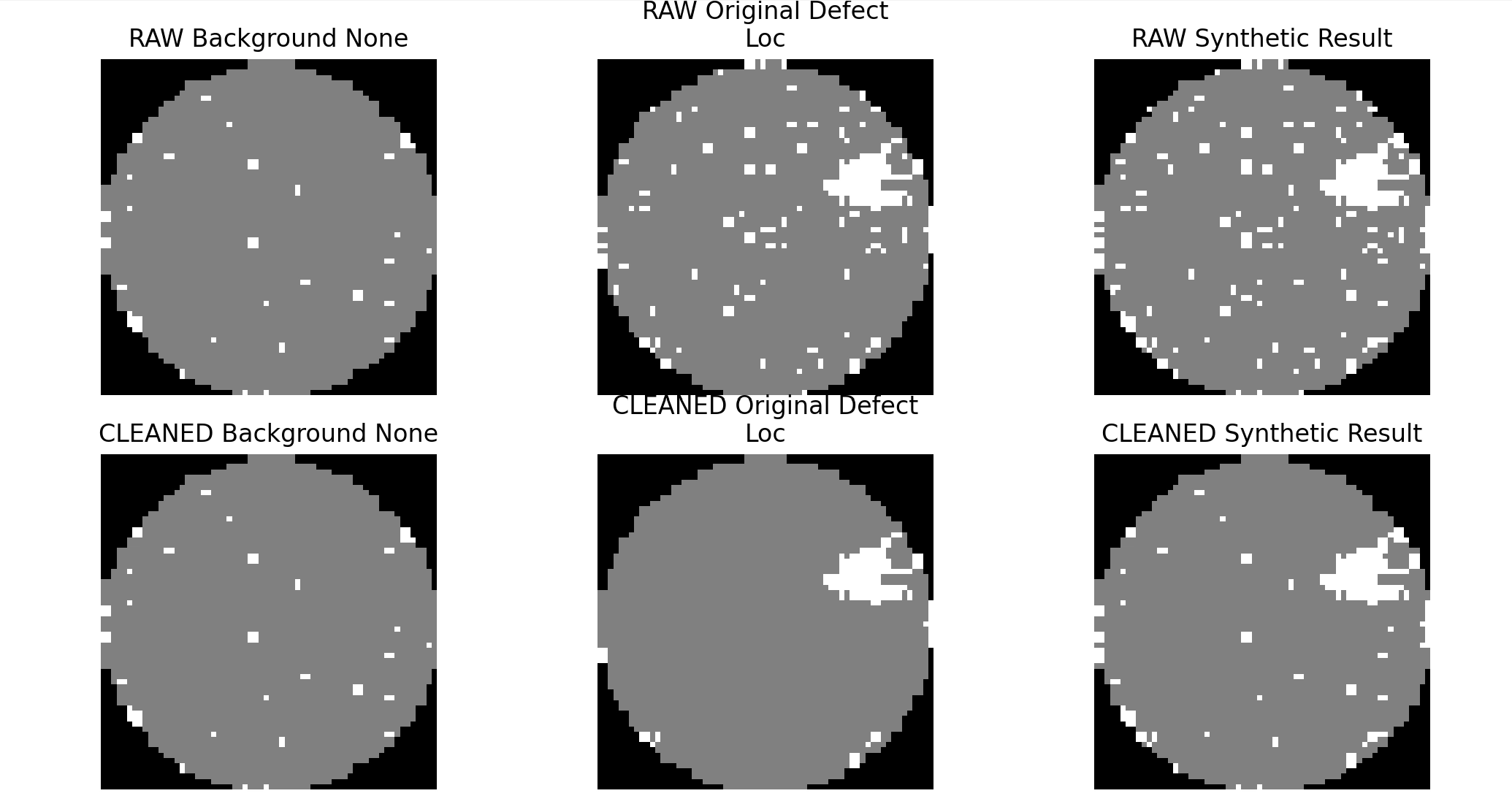

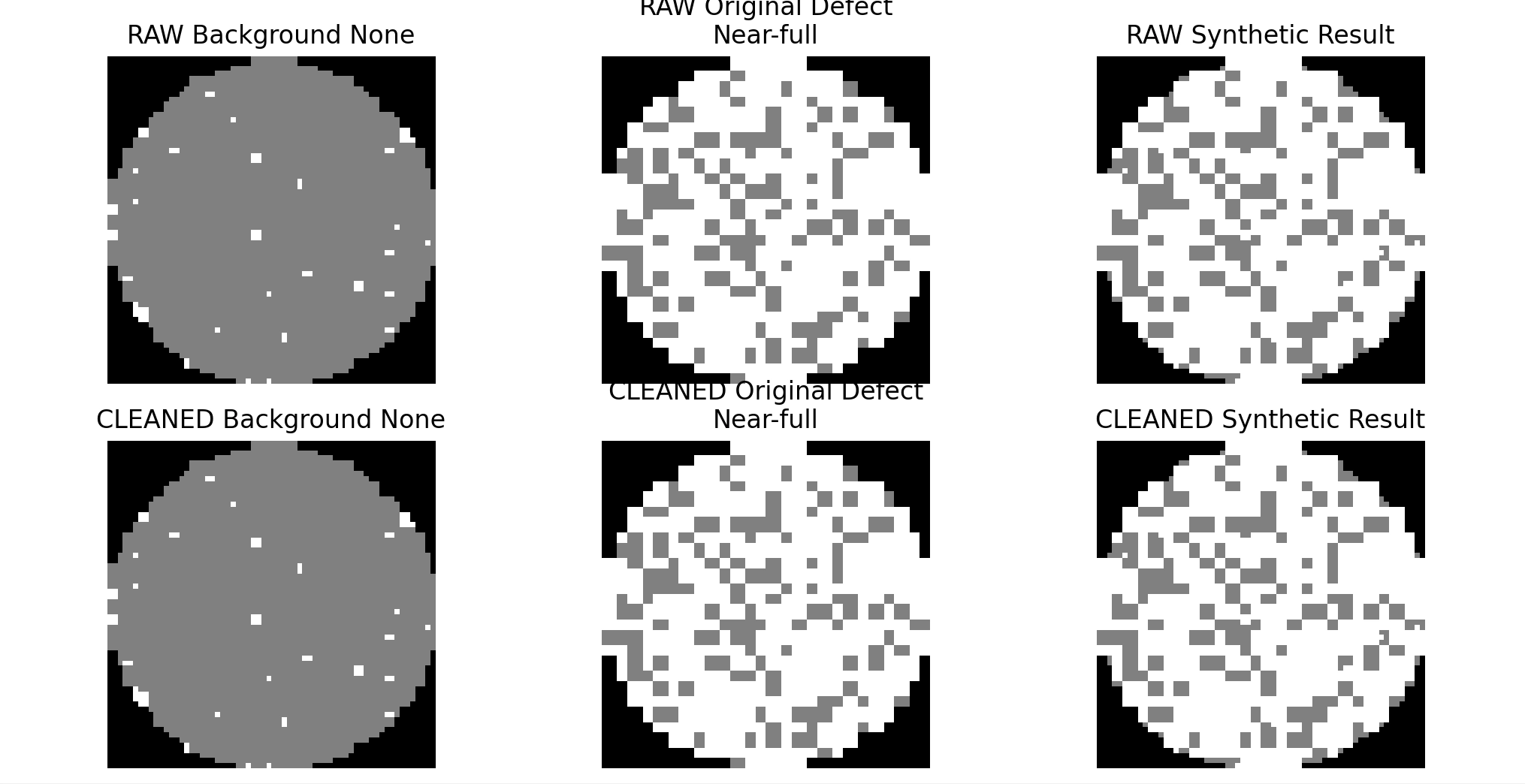

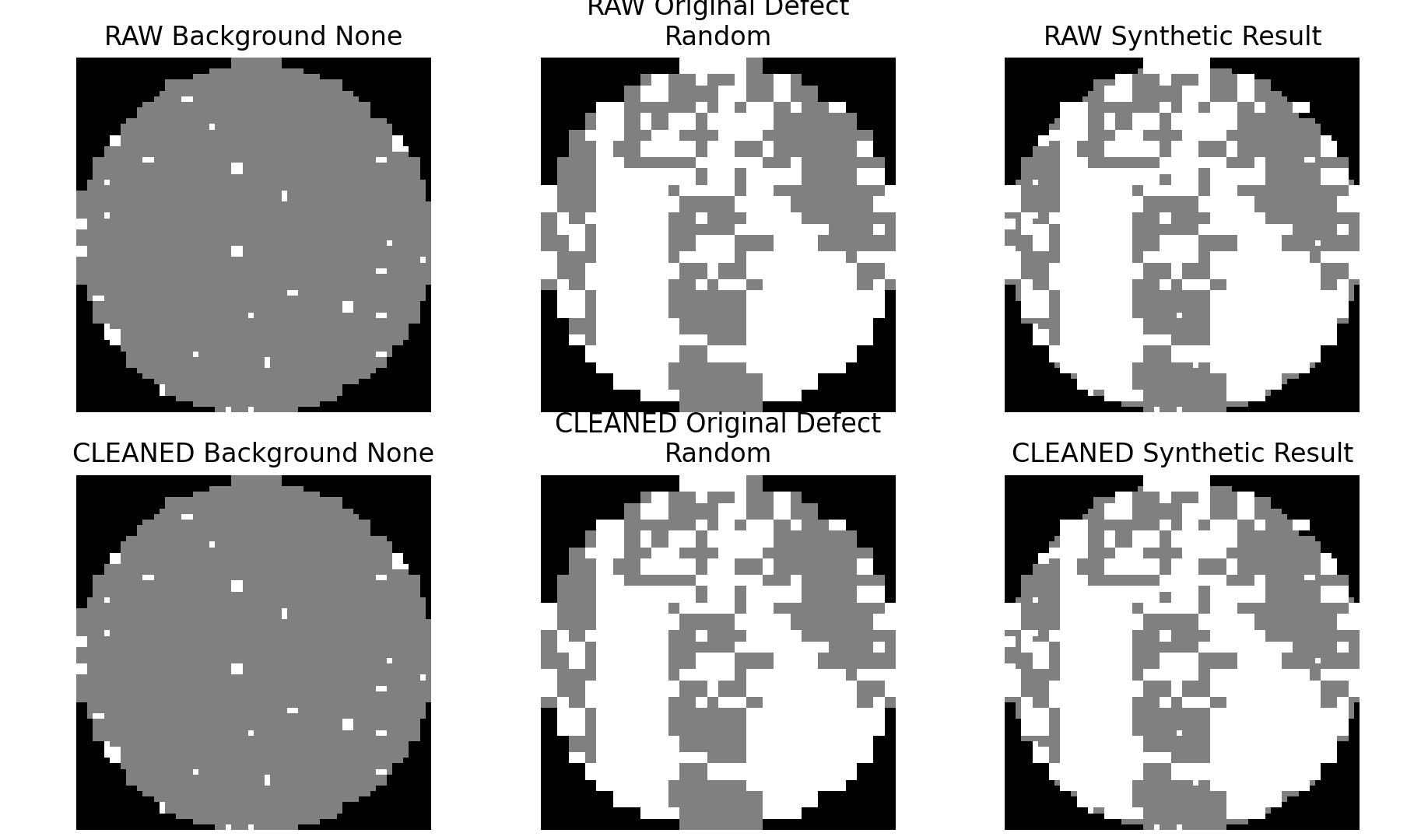

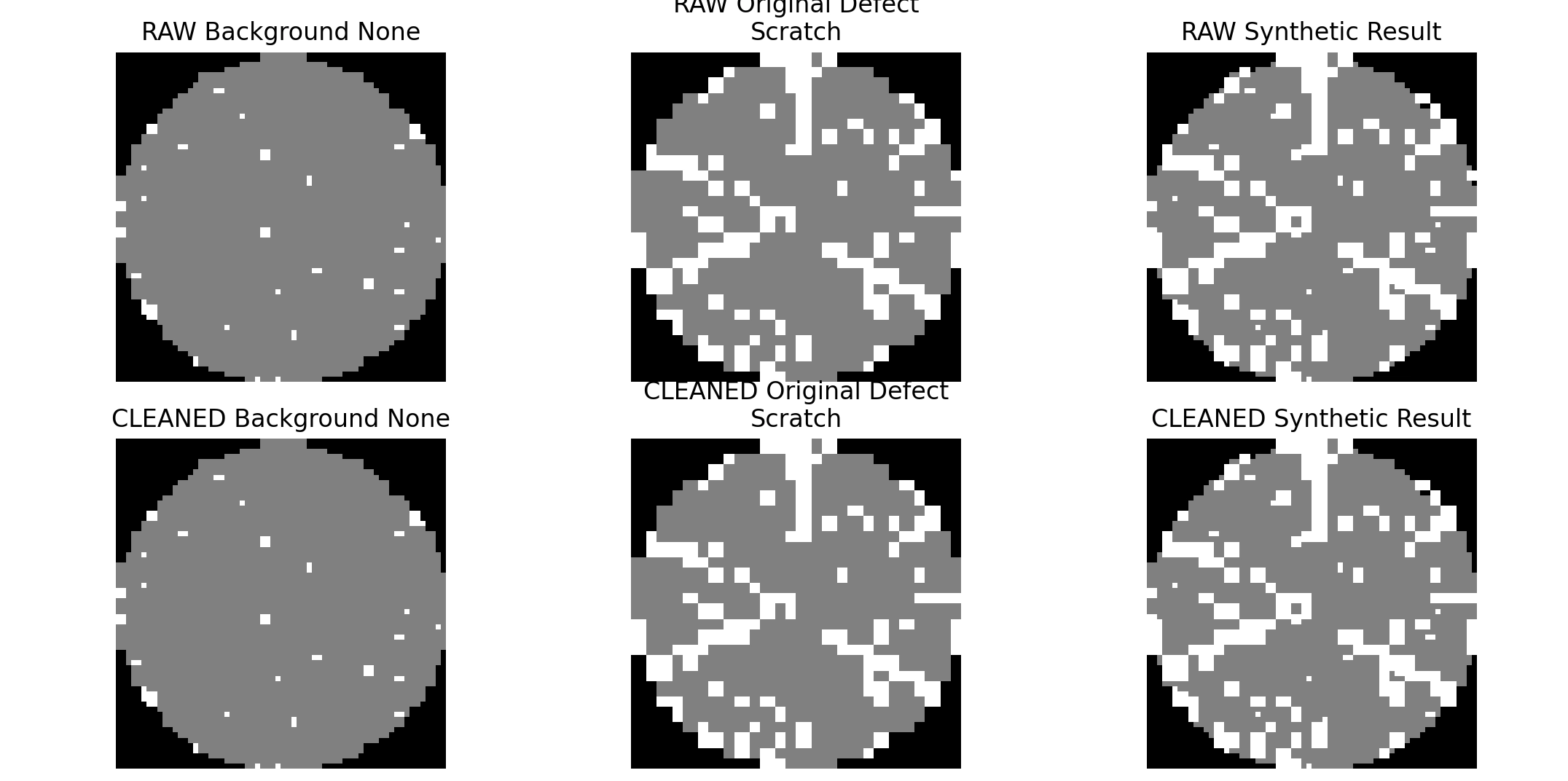

웨이퍼 결함 분류 실험을 위해 none 웨이퍼를 공통 배경으로 사용하는 합성 데이터셋 생성 과정을 진행했다. 결함 웨이퍼에서 결함 픽셀(값 2) 위치만 추출한 뒤, 이를 none 배경 위에 삽입하는 방식으로 synthetic 샘플을 생성했다. 이 과정을 통해 raw 결함 패턴과 cleaned 결함 패턴을 각각 반영한 합성 데이터셋을 구성할 수 있도록 했다.

합성 데이터셋은 fold 단위로 생성했으며, none 클래스는 2000장으로 샘플링하고 나머지 none 데이터는 background pool로 분리했다. 결함 클래스는 클래스별 목표 개수를 2000장으로 맞추어 클래스 불균형을 완화한 synthetic 데이터셋을 구축했다. 생성된 결과는 raw 버전과 cleaned 버전으로 각각 관리했다.

이후 background none, 원본 결함, 합성 결과를 나란히 시각화하며 결함 패턴이 정상적으로 반영되는지 검증했다. Edge-Loc와 Edge-Ring 등 주요 클래스에서 결함 형태가 유지된 채 합성이 잘 이루어지는 지는지.

전처리 코드도 다시 검토했다. 현재 cleaned 데이터셋은 모든 클래스를 일괄적으로 정제한 버전이 아니라, 클래스 특성에 따라 선택적으로 노이즈 제거를 적용하는 구조로 되어 있다. none, Random, Near-full은 원형을 유지하고, Edge-Ring과 Scratch는 전용 필터를 적용하며, 나머지 덩어리형 결함 클래스는 면적 기반 필터를 적용하는 방식이다. 이를 바탕으로 이번 합성 실험은 공통 none 배경 위에 raw 결함 패턴과 cleaned 결함 패턴을 각각 삽입해, 결함 패턴 정제 여부가 분류 성능에 미치는 영향을 비교하기 위한 단계로 정리했다.

향후에는 현재 구축한 분류용 데이터셋을 바탕으로 분류 성능 비교 실험을 먼저 진행하고, 이후 결함 픽셀 위치 정보를 활용해 bounding box를 생성하여 객체 탐지 데이터셋으로 확장할 계획이다.

# augment4_synthetic_cleaned.py

# cleaned 웨이퍼 데이터셋을 이용해서 fold 1~4의 synthetic cleaned 데이터셋을 만드는 코드

import pandas as pd

import numpy as np

import random

import os

SEED = 7 // seed(난수)는 7

TARGET_COUNT = 2000

random.seed(SEED)

np.random.seed(SEED)

def sample_none_class(df, target_count=2000, seed=42):

none_df = df[df['failure_str'].astype(str).str.lower() == 'none'].copy().reset_index(drop=True)

if len(none_df) < target_count:

raise ValueError(f"none 클래스 개수({len(none_df)})가 target_count({target_count})보다 적습니다.")

sampled_none = none_df.sample(n=target_count, random_state=seed)

background_pool = none_df.drop(sampled_none.index).reset_index(drop=True)

return sampled_none.reset_index(drop=True), background_pool

def synthesize_defect(background_map, defect_map):

synthetic_map = np.array(background_map, copy=True)

synthetic_map[defect_map == 2] = 2

return synthetic_map

def build_synthetic_class(defect_df, background_pool, target_count=2000, seed=42):

rng = np.random.default_rng(seed)

defect_df = defect_df.reset_index(drop=True)

if len(defect_df) == 0:

return pd.DataFrame(columns=['waferMap', 'failure_str', 'label'])

if len(background_pool) == 0:

raise ValueError("background_pool 이 비어 있습니다.")

results = []

defect_size = len(defect_df)

bg_size = len(background_pool)

for i in range(target_count):

defect_row = defect_df.iloc[i % defect_size]

bg_row = background_pool.iloc[rng.integers(0, bg_size)]

syn_map = synthesize_defect(bg_row['waferMap'], defect_row['waferMap'])

results.append({

'waferMap': syn_map,

'failure_str': defect_row['failure_str'],

'label': defect_row['label']

})

return pd.DataFrame(results)

def build_synthetic_dataset(df, target_per_class=2000, seed=42):

result_dfs = []

sampled_none, background_pool = sample_none_class(

df=df,

target_count=target_per_class,

seed=seed

)

result_dfs.append(sampled_none)

defect_df_all = df[df['failure_str'].astype(str).str.lower() != 'none'].copy()

defect_classes = sorted(defect_df_all['failure_str'].dropna().unique())

for cls in defect_classes:

cls_df = defect_df_all[defect_df_all['failure_str'] == cls].copy().reset_index(drop=True)

synthetic_cls_df = build_synthetic_class(

defect_df=cls_df,

background_pool=background_pool,

target_count=target_per_class,

seed=seed

)

result_dfs.append(synthetic_cls_df)

print(f"[CLEANED] {cls}: original={len(cls_df)}, synthetic={len(synthetic_cls_df)}")

final_df = pd.concat(result_dfs, ignore_index=True)

return final_df, background_pool

if __name__ == "__main__":

ROOT_DIR = r"C:\Users\eowkd\PBL2"

for i in range(1, 5):

input_path = os.path.join(ROOT_DIR, f"dataset_fold_{i}_cleaned.pkl")

output_path = os.path.join(ROOT_DIR, f"dataset_fold_{i}_synthetic_cleaned.pkl")

bg_output_path = os.path.join(ROOT_DIR, f"background_pool_fold_{i}_cleaned.pkl")

if not os.path.exists(input_path):

print(f"[CLEANED] fold {i} 입력 파일 없음: {input_path}")

continue

print("\n" + "=" * 60)

print(f"[CLEANED] fold {i} 처리 시작")

df = pd.read_pickle(input_path)

print("입력 파일:", input_path)

print("원본 shape:", df.shape)

print(df["failure_str"].value_counts())

final_df, background_pool = build_synthetic_dataset(

df=df,

target_per_class=TARGET_COUNT,

seed=SEED

)

final_df.to_pickle(output_path)

background_pool.to_pickle(bg_output_path)

print(f"[CLEANED] 저장 완료: {output_path}")

print(f"[CLEANED] background pool 저장 완료: {bg_output_path}")

print("[CLEANED] 최종 synthetic 분포:")

print(final_df["failure_str"].value_counts())

# augment4_synthetic_raw.py

# cleaned 웨이퍼 데이터셋을 이용해서 fold 1~4의 synthetic cleaned 데이터셋을 만드는 코드

import pandas as pd

import numpy as np

import random

import os

SEED = 7

TARGET_COUNT = 2000

random.seed(SEED)

np.random.seed(SEED)

def sample_none_class(df, target_count=2000, seed=42):

none_df = df[df['failure_str'].astype(str).str.lower() == 'none'].copy().reset_index(drop=True)

if len(none_df) < target_count:

raise ValueError(f"none 클래스 개수({len(none_df)})가 target_count({target_count})보다 적습니다.")

sampled_none = none_df.sample(n=target_count, random_state=seed)

background_pool = none_df.drop(sampled_none.index).reset_index(drop=True)

return sampled_none.reset_index(drop=True), background_pool

def synthesize_defect(background_map, defect_map):

synthetic_map = np.array(background_map, copy=True)

synthetic_map[defect_map == 2] = 2

return synthetic_map

def build_synthetic_class(defect_df, background_pool, target_count=2000, seed=42):

rng = np.random.default_rng(seed)

defect_df = defect_df.reset_index(drop=True)

if len(defect_df) == 0:

return pd.DataFrame(columns=['waferMap', 'failure_str', 'label'])

if len(background_pool) == 0:

raise ValueError("background_pool 이 비어 있습니다.")

results = []

defect_size = len(defect_df)

bg_size = len(background_pool)

for i in range(target_count):

defect_row = defect_df.iloc[i % defect_size]

bg_row = background_pool.iloc[rng.integers(0, bg_size)]

syn_map = synthesize_defect(bg_row['waferMap'], defect_row['waferMap'])

results.append({

'waferMap': syn_map,

'failure_str': defect_row['failure_str'],

'label': defect_row['label']

})

return pd.DataFrame(results)

def build_synthetic_dataset(df, target_per_class=2000, seed=42):

result_dfs = []

sampled_none, background_pool = sample_none_class(

df=df,

target_count=target_per_class,

seed=seed

)

result_dfs.append(sampled_none)

defect_df_all = df[df['failure_str'].astype(str).str.lower() != 'none'].copy()

defect_classes = sorted(defect_df_all['failure_str'].dropna().unique())

for cls in defect_classes:

cls_df = defect_df_all[defect_df_all['failure_str'] == cls].copy().reset_index(drop=True)

synthetic_cls_df = build_synthetic_class(

defect_df=cls_df,

background_pool=background_pool,

target_count=target_per_class,

seed=seed

)

result_dfs.append(synthetic_cls_df)

print(f"[RAW] {cls}: original={len(cls_df)}, synthetic={len(synthetic_cls_df)}")

final_df = pd.concat(result_dfs, ignore_index=True)

return final_df, background_pool

if __name__ == "__main__":

ROOT_DIR = r"C:\Users\eowkd\PBL2"

for i in range(1, 5):

input_path = os.path.join(ROOT_DIR, f"dataset_fold_{i}.pkl")

output_path = os.path.join(ROOT_DIR, f"dataset_fold_{i}_synthetic_raw.pkl")

bg_output_path = os.path.join(ROOT_DIR, f"background_pool_fold_{i}_raw.pkl")

if not os.path.exists(input_path):

print(f"[RAW] fold {i} 입력 파일 없음: {input_path}")

continue

print("\n" + "=" * 60)

print(f"[RAW] fold {i} 처리 시작")

df = pd.read_pickle(input_path)

print("입력 파일:", input_path)

print("원본 shape:", df.shape)

print(df["failure_str"].value_counts())

final_df, background_pool = build_synthetic_dataset(

df=df,

target_per_class=TARGET_COUNT,

seed=SEED

)

final_df.to_pickle(output_path)

background_pool.to_pickle(bg_output_path)

print(f"[RAW] 저장 완료: {output_path}")

print(f"[RAW] background pool 저장 완료: {bg_output_path}")

print("[RAW] 최종 synthetic 분포:")

print(final_df["failure_str"].value_counts())

# 시각화 코드

# 원본 결함 / none 배경 / 합성 결과 비교 / raw vs cleaned 비교 /synthetic raw vs synthetic cleaned 비교

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

import os

ROOT_DIR = r"C:\Users\eowkd\PBL2"

FOLD = 1

SEED = 7

def synthesize_defect(background_map, defect_map):

synthetic_map = np.array(background_map, copy=True)

synthetic_map[defect_map == 2] = 2

return synthetic_map

def load_data(fold=1):

data = {

"raw": pd.read_pickle(os.path.join(ROOT_DIR, f"dataset_fold_{fold}.pkl")),

"cleaned": pd.read_pickle(os.path.join(ROOT_DIR, f"dataset_fold_{fold}_cleaned.pkl")),

"synthetic_raw": pd.read_pickle(os.path.join(ROOT_DIR, f"dataset_fold_{fold}_synthetic_raw.pkl")),

"synthetic_cleaned": pd.read_pickle(os.path.join(ROOT_DIR, f"dataset_fold_{fold}_synthetic_cleaned.pkl")),

}

return data

def get_none_and_defect(df, class_name):

none_df = df[df["failure_str"].astype(str).str.lower() == "none"].copy().reset_index(drop=True)

defect_df = df[df["failure_str"] == class_name].copy().reset_index(drop=True)

return none_df, defect_df

def show_raw_cleaned_synthesis(raw_df, cleaned_df, class_name, sample_idx=0):

raw_none, raw_defect = get_none_and_defect(raw_df, class_name)

cleaned_none, cleaned_defect = get_none_and_defect(cleaned_df, class_name)

if len(raw_defect) == 0 or len(cleaned_defect) == 0:

print(f"{class_name} 클래스가 없습니다.")

return

raw_bg = raw_none.sample(n=1, random_state=SEED).iloc[0]["waferMap"]

cleaned_bg = cleaned_none.sample(n=1, random_state=SEED).iloc[0]["waferMap"]

raw_def = raw_defect.iloc[sample_idx % len(raw_defect)]["waferMap"]

cleaned_def = cleaned_defect.iloc[sample_idx % len(cleaned_defect)]["waferMap"]

raw_syn = synthesize_defect(raw_bg, raw_def)

cleaned_syn = synthesize_defect(cleaned_bg, cleaned_def)

plt.figure(figsize=(14, 8))

plt.subplot(2, 3, 1)

plt.imshow(raw_bg, cmap="gray")

plt.title("RAW Background none")

plt.axis("off")

plt.subplot(2, 3, 2)

plt.imshow(raw_def, cmap="gray")

plt.title(f"RAW Original Defect\n{class_name}")

plt.axis("off")

plt.subplot(2, 3, 3)

plt.imshow(raw_syn, cmap="gray")

plt.title("RAW Synthetic Result")

plt.axis("off")

plt.subplot(2, 3, 4)

plt.imshow(cleaned_bg, cmap="gray")

plt.title("CLEANED Background none")

plt.axis("off")

plt.subplot(2, 3, 5)

plt.imshow(cleaned_def, cmap="gray")

plt.title(f"CLEANED Original Defect\n{class_name}")

plt.axis("off")

plt.subplot(2, 3, 6)

plt.imshow(cleaned_syn, cmap="gray")

plt.title("CLEANED Synthetic Result")

plt.axis("off")

plt.tight_layout()

plt.show()

def show_synthetic_dataset_samples(syn_raw_df, syn_cleaned_df, class_name, n=5):

raw_cls = syn_raw_df[syn_raw_df["failure_str"] == class_name].reset_index(drop=True)

cleaned_cls = syn_cleaned_df[syn_cleaned_df["failure_str"] == class_name].reset_index(drop=True)

if len(raw_cls) == 0 or len(cleaned_cls) == 0:

print(f"{class_name} 클래스가 synthetic 데이터셋에 없습니다.")

return

n = min(n, len(raw_cls), len(cleaned_cls))

plt.figure(figsize=(3 * n, 6))

for i in range(n):

plt.subplot(2, n, i + 1)

plt.imshow(raw_cls.iloc[i]["waferMap"], cmap="gray")

plt.title(f"SYN RAW\n{class_name}\nidx={i}")

plt.axis("off")

plt.subplot(2, n, n + i + 1)

plt.imshow(cleaned_cls.iloc[i]["waferMap"], cmap="gray")

plt.title(f"SYN CLEANED\n{class_name}\nidx={i}")

plt.axis("off")

plt.tight_layout()

plt.show()

def show_raw_vs_cleaned_original(raw_df, cleaned_df, class_name, n=5):

raw_cls = raw_df[raw_df["failure_str"] == class_name].reset_index(drop=True)

cleaned_cls = cleaned_df[cleaned_df["failure_str"] == class_name].reset_index(drop=True)

if len(raw_cls) == 0 or len(cleaned_cls) == 0:

print(f"{class_name} 클래스가 없습니다.")

return

n = min(n, len(raw_cls), len(cleaned_cls))

plt.figure(figsize=(3 * n, 6))

for i in range(n):

plt.subplot(2, n, i + 1)

plt.imshow(raw_cls.iloc[i]["waferMap"], cmap="gray")

plt.title(f"RAW\n{class_name}\nidx={i}")

plt.axis("off")

plt.subplot(2, n, n + i + 1)

plt.imshow(cleaned_cls.iloc[i]["waferMap"], cmap="gray")

plt.title(f"CLEANED\n{class_name}\nidx={i}")

plt.axis("off")

plt.tight_layout()

plt.show()

if __name__ == "__main__":

data = load_data(FOLD)

raw_df = data["raw"]

cleaned_df = data["cleaned"]

syn_raw_df = data["synthetic_raw"]

syn_cleaned_df = data["synthetic_cleaned"]

print(f"FOLD = {FOLD}, SEED = {SEED}")

classes_to_check = [

"Center",

"Donut",

"Edge-Loc",

"Edge-Ring",

"Loc",

"Near-full",

"Random",

"Scratch",

]

# 1. raw/cleaned 배경+결함+합성 한 번에 비교

for cls in classes_to_check:

show_raw_cleaned_synthesis(raw_df, cleaned_df, cls, sample_idx=0)

'''

# 2. 원본 raw vs cleaned만 여러 장 비교

for cls in classes_to_check:

show_raw_vs_cleaned_original(raw_df, cleaned_df, cls, n=5)

# 3. synthetic raw vs synthetic cleaned 여러 장 비교

for cls in classes_to_check:

show_synthetic_dataset_samples(syn_raw_df, syn_cleaned_df, cls, n=5)

'''

결과

-> fold 1-4 결함합성증강 데이터셋 저장완료

근데 스크래치는 클린 버전에서도 노이즈 제거가 잘.. 안된 거 같다.